If Cyber Maturity Is More Standardized and Consistent, What Can This Enable?

Cybersecurity professionals don’t struggle to find maturity models. We struggle with the downstream effects of inconsistent semantics, inconsistent scoring, and inconsistent mapping:

- One site reports “Tier 3” (NIST CSF), another reports “MIL2” (C2M2), a third reports “Level 3” (CMMI-like), and leadership asks for benchmarking and an investment plan.

- Assessors interpret “repeatable,” “managed,” and “adaptive” differently across business units and geographies.

- Programs optimize for the score instead of optimizing for operational risk reduction.

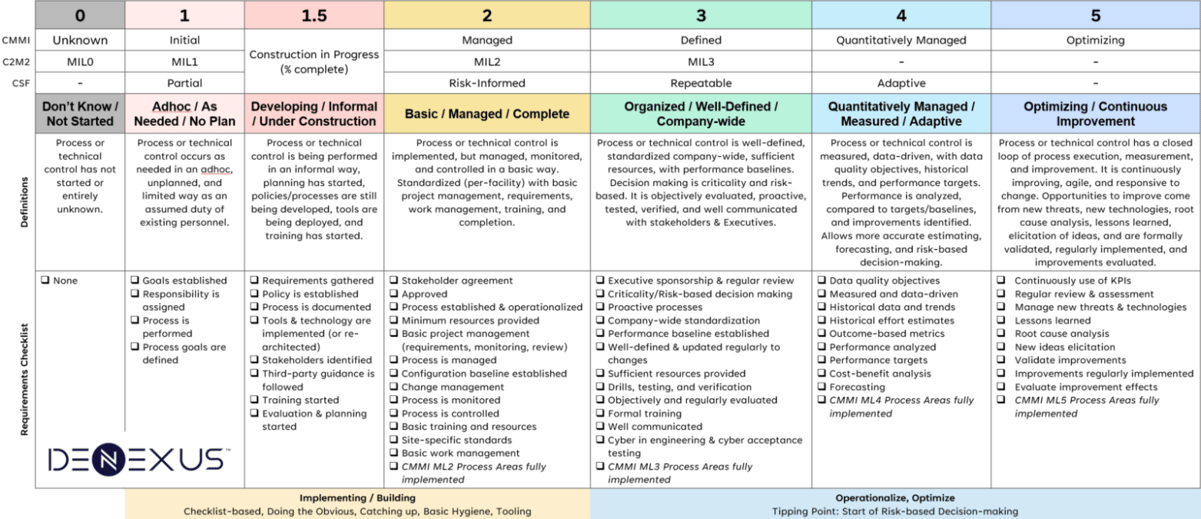

For years, I’ve been harmonizing and reconciling different cybersecurity maturity models, and this year I decided to put that work into the public domain. The goal is not to invent a fourth maturity model, but to harmonize the existing ones so they can be translated into one another (the way NIST CSF serves as a common language between other cyber standards).

I’m calling it the OT Cyber Maturity Journey (OT CMJ): a harmonized maturity journey that includes a pragmatic “Developing” (1.5) stage and an explicit cumulative rule (no rounding up) to improve repeatability and reduce score inflation. It was triggered by an ICS/OT use-case, but if you look closely, it applies to any environment that uses CMMI, C2M2, NIST CSF, 62443-2-4, etc.
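To make the cumulative rule concrete, here is a minimal Python sketch, assuming each OT CMJ level is defined by a list of requirement IDs. The requirement IDs (`R1.1`, `R2.2`, ...) and the helper `cumulative_level` are hypothetical, and reporting 0.0 when Level 1 is not met is an assumption of this sketch, not part of OT CMJ.

```python
from typing import Dict, Iterable, Set

# Ordered OT CMJ levels as described in the text: 1, the intermediate
# "Developing" stage (1.5), then 2 through 5.
LEVELS = [1.0, 1.5, 2.0, 3.0, 4.0, 5.0]


def cumulative_level(requirements: Dict[float, Iterable[str]], met: Set[str]) -> float:
    """Return the highest level whose requirements, and those of every lower
    level, are all met. No rounding up: the first unmet requirement stops the
    climb, even if some higher-level requirements are met.
    Returns 0.0 (hypothetical "below Level 1") if Level 1 is not met.
    A level with no requirements listed is treated as satisfied."""
    achieved = 0.0
    for level in LEVELS:
        if all(req in met for req in requirements.get(level, [])):
            achieved = level
        else:
            break  # cumulative rule: stop at the first gap
    return achieved


# Hypothetical requirement catalog and assessment results.
reqs = {
    1.0: ["R1.1"],
    1.5: ["R1.5.1", "R1.5.2"],
    2.0: ["R2.1", "R2.2"],
    3.0: ["R3.1"],
    4.0: ["R4.1"],
    5.0: ["R5.1"],
}
met = {"R1.1", "R1.5.1", "R1.5.2", "R2.1", "R3.1"}  # R2.2 is missing
print(cumulative_level(reqs, met))  # -> 1.5
```

R3.1 is met, but the missing Level 2 requirement R2.2 holds the score at 1.5; that is the “no rounding up” behavior that discourages score inflation.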

What follows are concrete use-cases unlocked by having a maturity scale that is more standardized (shared definitions), more consistent (cross-walked), and more defensible (requirements-based and cumulative).

- Use-case 1: Translation + benchmarking across different maturity frameworks

- Use-case 2: Risk modeling and cyber risk quantification using maturity-derived effectiveness coefficients

- Use-case 3: Standardization to reduce maturity-model proliferation

- Use-case 4: Maturity target setting and multi-year OT cyber roadmaps

- Use-case 5: Assessment repeatability

- Use-case 6: Supply Chain and Service Providers

- Use-case 7: Ransomware resilience planning

- Use-case 8: Community baselines and sector profiles

Details below.

Use-case 1: Translation + benchmarking across different maturity frameworks

Problem: Benchmarking is meaningless if maturity scales are not comparable. Even within the same framework, organizations often create local scoring rubrics, which defeats peer comparison.

How a Harmonized Maturity Model helps: OT CMJ functions as a translation layer between commonly used public-domain models (e.g., CSF tiers, C2M2 MILs, CMMI semantics), enabling apples-to-apples comparison across sites, subsidiaries, and partners. The NIST Cybersecurity Framework was intended to be the translation layer between different cybersecurity standards; OT CMJ can be the equivalent translation layer for maturity.

Implementation:

- Assess internal maturity at the capability/domain level using OT CMJ definitions/requirements.

- Convert results to a “native” view for stakeholders (e.g., CSF, C2M2) using the crosswalk.

- Benchmark internally (site-to-site), then externally (peer group) once an industry group adopts consistent scoring rules.
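The assess, convert, and benchmark steps above could look roughly like this in Python. This is a sketch under stated assumptions: `translate` and `benchmark` are hypothetical helpers, the crosswalk is passed in rather than hard-coded, and the crosswalk labels in the example are placeholders, not the published OT CMJ mapping to CSF tiers or C2M2 MILs.

```python
from statistics import median
from typing import Dict, Mapping, Tuple


def translate(levels: Mapping[str, float], crosswalk: Mapping[float, str]) -> Dict[str, str]:
    """Convert one site's OT CMJ levels (capability -> level) into a
    stakeholder's native labels using a supplied crosswalk (level -> label).
    Raises KeyError if a level has no crosswalk entry, rather than silently
    dropping a capability from the report."""
    return {cap: crosswalk[level] for cap, level in levels.items()}


def benchmark(assessments: Mapping[str, Mapping[str, float]]) -> Dict[str, Tuple[float, Dict[str, float]]]:
    """Site-to-site benchmark at the capability level.
    For each capability: (median level across sites, {site: gap to median}).
    A negative gap means the site is behind its internal peers."""
    capabilities = sorted({cap for levels in assessments.values() for cap in levels})
    result: Dict[str, Tuple[float, Dict[str, float]]] = {}
    for cap in capabilities:
        scores = {site: levels[cap] for site, levels in assessments.items() if cap in levels}
        peer_median = median(scores.values())
        result[cap] = (peer_median, {site: lv - peer_median for site, lv in scores.items()})
    return result


# Hypothetical sites, all assessed with the same OT CMJ definitions.
sites = {
    "Site A": {"remote_access": 2.0, "backup_restore": 1.5},
    "Site B": {"remote_access": 3.0, "backup_restore": 2.0},
    "Site C": {"remote_access": 1.5, "backup_restore": 2.0},
}
# Placeholder crosswalk labels for illustration only.
placeholder_crosswalk = {1.5: "placeholder-low", 2.0: "placeholder-mid", 3.0: "placeholder-high"}

print(benchmark(sites))
print(translate(sites["Site A"], placeholder_crosswalk))
```

Benchmarking against the median keeps the comparison at the capability level, so a site that leads on remote access but lags on backup/restore is not averaged into a single misleading score.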

Use-case 2: Risk modeling and cyber risk quantification using maturity-derived effectiveness coefficients

Problem: OT teams often can’t translate “maturity improvement” into risk reduction in a way ERM, finance, or insurers can consume.

How a Harmonized Maturity Model helps: OT CMJ enables consistent assignment of control strength/effectiveness assumptions by maturity level, which can feed quantitative or semi-quantitative models. NIST explicitly frames cybersecurity as an input into enterprise risk decisions and emphasizes integration with ERM processes. (NIST Publications)

Two practical approaches:

- Coefficient mapping (fast): Assign effectiveness coefficients to each maturity level for a given capability (e.g., remote access control, backup/restore, incident response). Coefficients should be capability-specific (a given maturity level in incident response does not imply the same risk reduction as the same level in vulnerability management).

- FAIR-style control analytics (rigorous): FAIR and FAIR-CAM focus on modeling risk as loss event frequency and magnitude, and include constructs for evaluating how controls reduce risk. This gives you a disciplined way to justify why a maturity move changes risk assumptions. (The Open Group)

Implementation:

- Select the top loss scenarios (e.g., ransomware-induced outage, unsafe operating condition, quality loss, environmental release).

- Identify which OT cyber capabilities materially influence scenario frequency and/or magnitude.

- Use OT CMJ maturity to justify control strength assumptions used in the model.

- Recalculate risk deltas for proposed initiatives (Level 1.5 → 2, 2 → 3, etc.).

Why it matters: If maturity scoring is inconsistent, your risk quantification inputs become arbitrary and the model loses credibility. If the intent is to assign “effectiveness coefficients” to each maturity level (e.g., L2-Basic is 50% effective), consistency becomes even more important. As more data becomes available to support the effectiveness values, the coefficients can then be refined.
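A minimal sketch of the coefficient approach feeding a simplified FAIR-style calculation (annualized loss = loss event frequency × loss magnitude). Assumptions to flag loudly: the coefficients are hypothetical placeholders (only the 50% at Level 2 echoes the example above), each capability is assumed to reduce either frequency or magnitude, and capabilities are assumed to act independently so their residuals multiply. This is not FAIR-CAM itself, which models control effects in far more detail.

```python
from typing import Dict, Tuple

# Hypothetical capability-specific coefficients by OT CMJ level.
# capability -> (what it reduces, {level: effectiveness})
# Only "Level 2 = 0.50" mirrors the text; all other values are placeholders.
CAPABILITIES: Dict[str, Tuple[str, Dict[float, float]]] = {
    "remote_access": ("frequency", {1.0: 0.10, 1.5: 0.25, 2.0: 0.50, 3.0: 0.65, 4.0: 0.75, 5.0: 0.80}),
    "backup_restore": ("magnitude", {1.0: 0.10, 1.5: 0.30, 2.0: 0.50, 3.0: 0.70, 4.0: 0.80, 5.0: 0.85}),
}


def annualized_loss(base_lef: float, base_lm: float, levels: Dict[str, float]) -> float:
    """Simplified FAIR-style estimate for one loss scenario.
    base_lef: loss events per year with no credit for these capabilities.
    base_lm: loss per event. Each capability multiplies either frequency or
    magnitude by (1 - effectiveness); independence is assumed."""
    lef, lm = base_lef, base_lm
    for capability, level in levels.items():
        acts_on, coefficients = CAPABILITIES[capability]
        residual = 1.0 - coefficients[level]
        if acts_on == "frequency":
            lef *= residual
        else:
            lm *= residual
    return lef * lm


def risk_delta(base_lef: float, base_lm: float,
               current: Dict[str, float], proposed: Dict[str, float]) -> float:
    """Annualized risk reduction from moving the current levels to the proposed levels."""
    return annualized_loss(base_lef, base_lm, current) - annualized_loss(base_lef, base_lm, proposed)


# Hypothetical ransomware-induced outage scenario: 0.2 events/year, 5M per event.
current = {"remote_access": 1.5, "backup_restore": 2.0}
proposed = {"remote_access": 2.0, "backup_restore": 2.0}  # Level 1.5 -> 2 initiative
print(annualized_loss(0.2, 5_000_000, current))
print(risk_delta(0.2, 5_000_000, current, proposed))
```

With these placeholder inputs, moving remote access from 1.5 to 2 cuts the scenario's annualized loss from about 375,000 to about 250,000. The point is the mechanics of the delta, not the numbers.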

Use-case 3: Standardization to reduce maturity-model proliferation

(And create a starting point for the next iteration, while staying in the public domain.)

Problem: Security standards and frameworks keep reinventing maturity models, and consultancies often introduce their own proprietary versions in their reports. This fragments the community and reduces comparability.

How a Harmonized Maturity Model helps: A shared baseline encourages the industry to evolve from “new model creation” to “improve the common model,” including:

- better evidence rules,

- negative indicators/anti-patterns,

- more granular domain-level requirements,

- mapping to widely used control catalogs.

This aligns with how NIST CSF positions itself: a common taxonomy for communication, profiles for tailoring, and informative references for mapping across standards. (NIST Publications)

Use-case 4: Maturity target setting and multi-year OT cyber roadmaps

Problem: “Raise maturity everywhere” is not a strategy—especially in OT, where constraints (uptime, safety, vendor support, lifecycle) matter.

How a Harmonized Maturity Model helps: You can set target maturity by criticality and build an investment roadmap that is defensible and measurable. This matches the stated intent of C2M2: measure capabilities over time, set target maturity based on risk, and prioritize actions/investments to meet targets. (The Department of Energy's Energy.gov)

Implementation:

- Self-assess your maturity level for each function, category, and subcategory in your chosen framework or standard (e.g., NIST CSF, C2M2, 62443).

- Identify gaps in your implementation and use the OT CMJ requirements to help determine what comes next. This is the value consultants provide: helping assess where you are and recommending the next stage in your cybersecurity maturity journey.

- Map baseline practices (e.g., account security, asset inventory, vulnerability management) to OT CMJ Level 2 (“Basic”) requirements.

- Define Level 3–5 “what’s next” requirements (e.g., org-wide standardization, measured outcomes, continuous improvement).

- Transform these requirements into a sequence, project plan, schedule, and budget across multiple years.

OT-specific nuance: “Target maturity” often differs by architecture zone (e.g., DMZ vs cell/area zone vs SIS zone). Use OT CMJ as the maturity dimension; use your architecture zoning model and criticality as the scope dimension.
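One way to turn zone-specific targets into a sequenced plan is sketched below. The zones come from the text; the targets, the zone priority ranking, and the `roadmap` helper are hypothetical. The sketch assumes gaps are closed one OT CMJ level at a time (including the 1.5 stage) and orders work in waves: every zone's first step comes before any zone's second step, with more critical zones first within each wave.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

LEVELS = [1.0, 1.5, 2.0, 3.0, 4.0, 5.0]  # OT CMJ levels, including "Developing"


@dataclass(frozen=True)
class Step:
    wave: int          # 1 = first increment for this zone/capability
    priority: int      # hypothetical zone criticality rank (1 = most critical)
    zone: str
    capability: str
    from_level: float
    to_level: float


def roadmap(current: Dict[Tuple[str, str], float],
            targets: Dict[Tuple[str, str], float],
            zone_priority: Dict[str, int]) -> List[Step]:
    """Break each (zone, capability) gap into one-level increments and order them
    by wave, then zone criticality. Unassessed pairs start at 0.0 (assumption)."""
    steps: List[Step] = []
    for (zone, capability), target in targets.items():
        start = current.get((zone, capability), 0.0)
        previous = start
        pending = [lv for lv in LEVELS if start < lv <= target]
        for wave, level in enumerate(pending, start=1):
            steps.append(Step(wave, zone_priority[zone], zone, capability, previous, level))
            previous = level
    return sorted(steps, key=lambda s: (s.wave, s.priority, s.zone, s.capability))


# Hypothetical current levels and zone-specific targets for one capability.
current = {("SIS zone", "remote_access"): 2.0,
           ("cell/area zone", "remote_access"): 1.5,
           ("DMZ", "remote_access"): 1.0}
targets = {("SIS zone", "remote_access"): 3.0,
           ("cell/area zone", "remote_access"): 2.0,
           ("DMZ", "remote_access"): 2.0}
priority = {"SIS zone": 1, "cell/area zone": 2, "DMZ": 3}

for step in roadmap(current, targets, priority):
    print(step)
```

Each `Step` can then be costed and scheduled into a multi-year plan; the wave ordering is only one defensible default and could be replaced by risk deltas from the quantification use-case.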

Use-case 5: Assessment repeatability

Problem: Many maturity assessments are workshop-driven and drift over time. The same program assessed by two teams yields two scores.

How a Harmonized Maturity Model helps: OT CMJ’s requirements-based, cumulative structure supports more defensible scoring.

Where to take this next (and why it matters):

- Borrow the idea of negative indicators from other assurance models. For example, the UK Cyber Assessment Framework (CAF) uses “not achieved” indicators that are intended to be strong signals that an outcome is not met. (UK Government Security - Beta)

- The Australian Energy Sector Cyber Security Framework (AESCSF) explicitly uses anti-patterns; if present, they prevent achieving the associated maturity measure. (AEMO)

Implementation:

- Reference CMMI, NIST CSF, and other frameworks for more detailed requirements. Use them to define evidence expectations per maturity level (documents, configs, logs, training records, test results) that align with the requirements in the harmonized maturity model.

- Define negative indicators that “cap” maturity (e.g., “restore tests not performed” caps recovery maturity at ≤2 regardless of documentation quality). We intend to add these anti-patterns and negative indicators in future iterations of the harmonized OT CMJ model.
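Applying negative indicators after the cumulative score could look like the sketch below. Only the rule that “restore tests not performed” caps recovery at 2 comes from the text; the indicator key, the capability names, and `apply_caps` are hypothetical placeholders for the anti-pattern catalog that future OT CMJ iterations would define.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical negative-indicator catalog: indicator -> (capability, maximum level).
# The single entry mirrors the example in the text.
NEGATIVE_INDICATORS: Dict[str, Tuple[str, float]] = {
    "restore_tests_not_performed": ("recovery", 2.0),
}


def apply_caps(levels: Dict[str, float], observed: Set[str]) -> Tuple[Dict[str, float], List[str]]:
    """Cap capability levels when a negative indicator is observed, regardless of
    how good the documentation looks. Returns the capped levels plus audit notes
    so assessors can see why a score was lowered."""
    capped = dict(levels)
    notes: List[str] = []
    for indicator in sorted(observed):
        if indicator not in NEGATIVE_INDICATORS:
            # Fail loudly on typos instead of silently skipping a cap.
            raise ValueError(f"unknown negative indicator: {indicator}")
        capability, ceiling = NEGATIVE_INDICATORS[indicator]
        if capped.get(capability, 0.0) > ceiling:
            notes.append(f"{capability}: {capped[capability]} capped at {ceiling} ({indicator})")
            capped[capability] = ceiling
    return capped, notes


levels, notes = apply_caps({"recovery": 3.0, "remote_access": 2.0}, {"restore_tests_not_performed"})
print(levels)
print(notes)
```

Keeping the caps separate from the requirement checks means the anti-pattern list can grow in later iterations without touching the core scoring rule.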

Use-case 6: Supply Chain and Service Providers

Problem: OT dependencies (OEMs, integrators, MSPs, remote support) create risk, but supplier requirements are often vague (“follow best practices”) or overly IT-centric.

How a Harmonized Maturity Model helps: Use OT CMJ to express minimum maturity expectations for third parties in contract language, covering:

- their remote access,

- their patch/vulnerability program,

- their incident response, coordination and notification,

- their change approval workflows, and

- other aspects of their cybersecurity program.

NIST SP 800-161 Rev. 1 provides a structured approach for cybersecurity supply chain risk management (C‑SCRM), including strategy, plans, policies, and risk assessments—concepts OT CMJ can maturity-grade. (NIST Computer Security Resource Center)

The ISA/IEC 62443-2-4 standard focuses on security program requirements for ICS/OT service providers and is based on a CMMI-like maturity model for measuring their capabilities. The maturity model in Part 2-4 can be replaced with OT CMJ.

Implementation:

- Leverage 62443-2-4 and SP 800-161 to identify the relevant service provider capabilities, and use OT CMJ to set and measure their capability levels.

- Develop a self-assessment questionnaire for your suppliers and service providers to attest to their current capability. If they have to explicitly check off individual requirements, the result is likely to be more accurate than a traditional maturity-model self-rating.

- Create “supplier maturity profiles” aligned to service type (OEM remote support vs integrator vs SOC/MDR provider).

- Require evidence aligned to OT CMJ level claims.
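Checking a supplier’s attested levels against a service-type profile, and flagging claims that lack evidence, might be sketched as follows. The service types and areas come from the text; the minimum levels in `PROFILES`, the `Claim` structure, and `review_supplier` are hypothetical and are not recommended contract minimums.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical supplier maturity profiles: service type -> {area: minimum OT CMJ level}.
# Placeholder values for illustration only.
PROFILES: Dict[str, Dict[str, float]] = {
    "OEM remote support": {"remote_access": 3.0, "incident_response": 2.0},
    "integrator": {"change_approval": 2.0, "patch_vulnerability": 2.0},
    "SOC/MDR provider": {"incident_response": 3.0},
}


@dataclass
class Claim:
    level: float                                        # attested OT CMJ level
    evidence: List[str] = field(default_factory=list)   # e.g. config exports, test reports


def review_supplier(service_type: str, claims: Dict[str, Claim]) -> List[str]:
    """Return findings for one supplier; an empty list means the attestation
    meets the profile and every claim is backed by at least one evidence item."""
    findings: List[str] = []
    for area, minimum in PROFILES[service_type].items():
        claim = claims.get(area)
        if claim is None:
            findings.append(f"{area}: no attestation (requires {minimum})")
        elif claim.level < minimum:
            findings.append(f"{area}: attested {claim.level}, below required {minimum}")
        elif not claim.evidence:
            findings.append(f"{area}: attested {claim.level} but no supporting evidence")
    return findings


findings = review_supplier("OEM remote support", {
    "remote_access": Claim(3.0, ["MFA configuration export"]),
    "incident_response": Claim(2.0),  # claim without evidence
})
print(findings)
```

Treating a claim without evidence as a finding, even when the level is high enough, is what makes the profile enforceable in contract language rather than a paper exercise.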

Use-case 7: Ransomware resilience planning

Problem: Ransomware readiness tools exist, but organizations struggle to tie findings to long-term maturity roadmaps.

How a Harmonized Maturity Model helps: Use OT CMJ maturity levels to structure ransomware resilience work into a progression:

- Level 1–2: basic containment and restore capability is established

- Level 3: verified, standardized recovery patterns, drills, and cross-functional playbooks

- Level 4–5: data-driven resilience metrics, continuous improvement loops

CISA’s ecosystem includes ransomware readiness assessment approaches (e.g., via CSET’s ransomware assessment options) designed to help organizations evaluate readiness. OT CMJ can provide the common maturity “spine” for prioritizing and tracking those improvements over time. (CISA)

Use-case 8: Community baselines and sector profiles

Problem: Individual organizations build maturity models and “target states” in isolation, then struggle to justify why their target is appropriate.

How a Harmonized Maturity Model helps: Use OT CMJ to support community-driven baselining and target states, similar to NIST CSF Community Profiles, which are baseline outcomes created and published to address shared interests among organizations. (NIST)

Implementation:

- Establish an OT CMJ-based “community profile” for a subsector (e.g., midstream pipelines, municipal water, discrete manufacturing).

- DeNexus and cyber insurance companies are in a unique position to aggregate maturity self-assessment data, anonymize it, and develop industry peer averages for comparison. As our customer base grows, so does our peer baseline.

- Publish target maturity levels by criticality tier and capability.

- Enable benchmarking without forcing everyone into the same underlying framework.
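Aggregating self-assessments into a subsector peer baseline could be sketched as below. The `MIN_PEERS` suppression threshold, the record layout, and `peer_baseline` are assumptions; dropping organization names and suppressing small groups is a basic safeguard, not a complete anonymization scheme.

```python
from collections import defaultdict
from statistics import mean, median
from typing import Dict, List, Tuple

MIN_PEERS = 5  # hypothetical threshold: suppress groups too small to stay anonymous

Record = Tuple[str, str, str, float]  # (organization, subsector, capability, OT CMJ level)


def peer_baseline(records: List[Record]) -> Dict[Tuple[str, str], Dict[str, float]]:
    """Per (subsector, capability): peer count, mean, and median OT CMJ level.
    Organization names never appear in the output, and groups with fewer than
    MIN_PEERS distinct organizations are omitted."""
    groups: Dict[Tuple[str, str], Dict[str, float]] = defaultdict(dict)
    for org, subsector, capability, level in records:
        groups[(subsector, capability)][org] = level  # one score per org; last one wins
    baseline: Dict[Tuple[str, str], Dict[str, float]] = {}
    for key, by_org in groups.items():
        if len(by_org) < MIN_PEERS:
            continue
        levels = list(by_org.values())
        baseline[key] = {"peers": len(levels), "mean": mean(levels), "median": median(levels)}
    return baseline


# Hypothetical submissions: five water utilities, two pipeline operators.
records = [(f"Org{i}", "municipal water", "remote_access", lv)
           for i, lv in enumerate([1.0, 1.5, 2.0, 2.0, 3.0])]
records += [("OrgX", "midstream pipelines", "remote_access", 2.0),
            ("OrgY", "midstream pipelines", "remote_access", 3.0)]
print(peer_baseline(records))  # the pipeline group is suppressed (only 2 peers)
```

Reporting the median alongside the mean helps when a few very mature (or very immature) organizations would otherwise skew the published baseline.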

Closing Thoughts

As you can tell, I’m passionate about cybersecurity maturity, having used it throughout my customer-facing OT cyber consulting engagements over the last 20+ years. I’ve seen the usefulness, the pitfalls, and the evolution of maturity models and their application to cybersecurity.

Many would argue that maturity levels are not technical enough. I would agree if you’re the technical engineer responsible for patching, hardening, and other front-line cybersecurity operations. But the terminology used on the front line doesn’t translate well and isn’t well understood by senior management. Determining the maturity level of each cybersecurity function/category is close to the cyber insurer’s method for assessing cyber risk.

Maturity modeling of cybersecurity remains an important aspect of cyber risk management. It reminds us that cybersecurity is a continuous journey of improvement, with the maturity requirements serving as guideposts for what we should be doing next.

Once again, the use-cases above are unlocked by having a maturity scale that is more standardized (shared definitions), more consistent (cross-walked), and more defensible (requirements-based and cumulative).